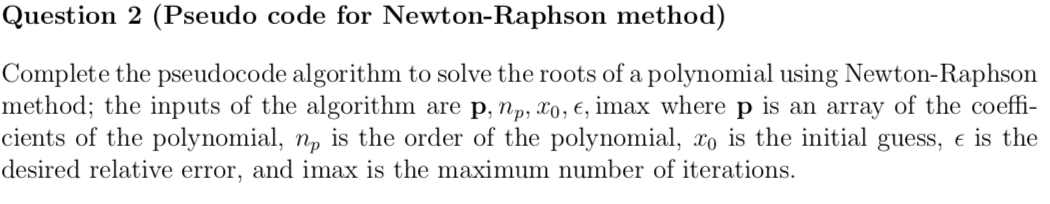

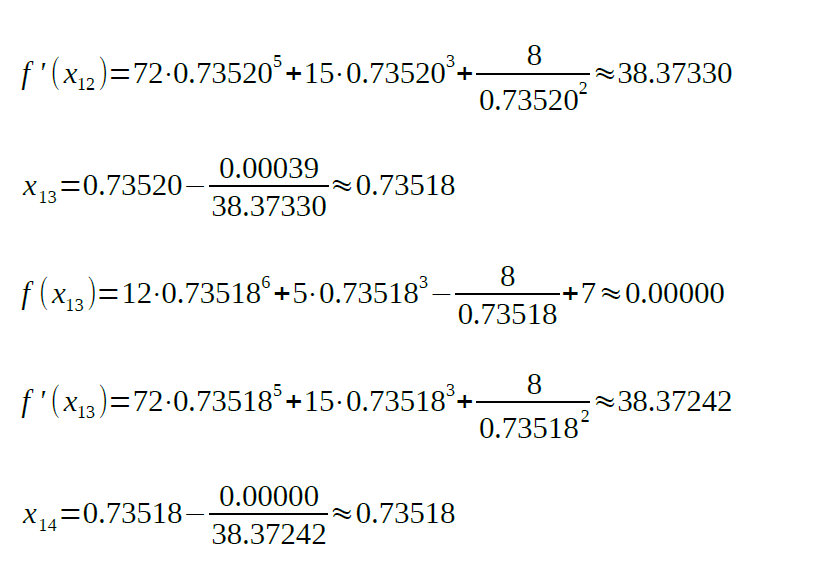

The parameters are updated iteratively using either Gradient Ascent or Newton-Raphson methods. Convergence is reached when the tolerance level on the cost function has been reached, the full Hessian is no longer invertible, or the maximum number of iterations has been exceeded. None, either or both of LASSO (least absolute shrinkage and selection operator) regression (L1) and Ridge regression (L2) are implemented using the mixing parameter.

The linear decision boundary shown in the figures results from setting the target variable to zero and rearranging equation (1).

Termination results printed by the script include:

Using gradientAscent optimisation method.

LOGISTIC REGRESSION USING NEWTON TERMINATION RESULTS

Final weights: theta0:-25.51, theta1:+11.25, theta02:-11.283.

Finished because cost function tolerance reached. Cost: +0.2190

Finished because singular Hessian.
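The three stopping rules and the decision boundary described above can be sketched as follows. This is a minimal illustration, not the original script: it assumes two features plus an intercept, an unregularised cost, and synthetic data, and the names `fit_newton_raphson` and `decision_boundary_x2` are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def negative_log_likelihood(theta, X, y):
    # Mean negative log-likelihood, used here as the cost function.
    p = np.clip(sigmoid(X @ theta), 1e-12, 1 - 1e-12)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def fit_newton_raphson(features, y, tol=1e-8, max_iter=50):
    # Hypothetical helper: prepend an intercept column, then iterate.
    X = np.column_stack([np.ones(len(features)), features])
    theta = np.zeros(X.shape[1])
    cost = negative_log_likelihood(theta, X, y)
    reason = "maximum number of iterations exceeded"
    for _ in range(max_iter):
        p = sigmoid(X @ theta)
        gradient = X.T @ (p - y) / len(y)
        hessian = (X.T * (p * (1 - p))) @ X / len(y)
        # Treat a numerically singular Hessian as a stopping condition.
        if np.linalg.cond(hessian) > 1.0 / np.finfo(float).eps:
            reason = "singular Hessian"
            break
        theta = theta - np.linalg.solve(hessian, gradient)
        new_cost = negative_log_likelihood(theta, X, y)
        converged = abs(cost - new_cost) < tol
        cost = new_cost
        if converged:
            reason = "cost function tolerance reached"
            break
    return theta, cost, reason

def decision_boundary_x2(theta, x1):
    # Assumes the boundary is theta0 + theta1*x1 + theta2*x2 = 0, solved for x2.
    return -(theta[0] + theta[1] * x1) / theta[2]

# Synthetic, overlapping two-class data (an assumption; the post uses Iris).
rng = np.random.default_rng(0)
features = np.vstack([rng.normal(-1.0, 1.0, size=(50, 2)),
                      rng.normal(1.0, 1.0, size=(50, 2))])
labels = np.concatenate([np.zeros(50), np.ones(50)])

theta, cost, reason = fit_newton_raphson(features, labels)
print(f"Finished because {reason}. Cost: {cost:+.4f}")
print("Boundary at x1 = 0:", decision_boundary_x2(theta, 0.0))
```

The Hessian check uses the condition number rather than waiting for `np.linalg.solve` to fail, since a nearly singular matrix can still be solved numerically while producing an unusably large step.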

Logistic regression implemented from scratch: a Python script to estimate the coefficients for logistic regression using either the Gradient Ascent or the Newton-Raphson optimisation algorithm. The estimates are trained by optimising the conditional maximum likelihood (cost) function. You can further choose none, one or both of Ridge and LASSO regularisation.

The script uses the Iris dataset available in sklearn, which contains characteristics of 3 types of Iris plant and is a common dataset when experimenting with data analysis. To learn more about the dataset, click here.
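As a rough illustration of the Gradient Ascent option, the sketch below maximises the mean conditional log-likelihood with a fixed step size. It is not the original script: the function names, learning rate, tolerance and synthetic two-feature data are all assumptions made for the example, and no regularisation is applied.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def mean_log_likelihood(theta, X, y):
    # Conditional log-likelihood averaged over observations.
    p = np.clip(sigmoid(X @ theta), 1e-12, 1 - 1e-12)
    return np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def fit_gradient_ascent(features, y, learning_rate=0.1, tol=1e-8, max_iter=10_000):
    # Hypothetical helper: prepend an intercept column, then climb the gradient.
    X = np.column_stack([np.ones(len(features)), features])
    theta = np.zeros(X.shape[1])
    previous = mean_log_likelihood(theta, X, y)
    for iteration in range(1, max_iter + 1):
        gradient = X.T @ (y - sigmoid(X @ theta)) / len(y)
        theta = theta + learning_rate * gradient
        current = mean_log_likelihood(theta, X, y)
        if abs(current - previous) < tol:
            break
        previous = current
    return theta, current, iteration

# Synthetic, overlapping two-class data (an assumption; the post uses Iris).
rng = np.random.default_rng(0)
features = np.vstack([rng.normal(-1.0, 1.0, size=(50, 2)),
                      rng.normal(1.0, 1.0, size=(50, 2))])
labels = np.concatenate([np.zeros(50), np.ones(50)])

theta, final_ll, n_iter = fit_gradient_ascent(features, labels)
design = np.column_stack([np.ones(len(features)), features])
predictions = (sigmoid(design @ theta) >= 0.5).astype(float)
accuracy = np.mean(predictions == labels)
print("weights:", theta, "iterations:", n_iter, "accuracy:", accuracy)
```

To try this on the data described in the post, the feature matrix and labels could instead be taken from `sklearn.datasets.load_iris`, restricted to two classes and two feature columns; which classes and columns the original script uses is not stated here.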
